
On March 2, 2026, Microsoft published an advisory on OAuth redirection abuse enabling phishing and malware delivery. Microsoft described phishing-led campaigns where attackers register OAuth apps with attacker-controlled redirect URIs, then send legitimate-looking Microsoft login links that intentionally drive the browser into an authorization error path and bounce victims to attacker infrastructure.

This isn’t credential theft or classic token theft. The user still touches real Microsoft infrastructure, but the attacker wins when Entra ID redirects the browser to the app’s registered URI, which points to a phishing page, malware dropper, or relay endpoint.

This post walks through building detection and hardening for this technique using Microsoft Sentinel and Entra ID Conditional Access.

Hands-on Lab: All KQL queries, PowerShell scripts, and deployment automation are in the companion lab.

How the Attack Works

The OAuth redirect abuse pattern exploits how Entra ID handles authentication errors and consent flows. As documented in RFC 9700 Section 4.11.2 (“Authorization Server as Open Redirector”), attackers can deliberately trigger OAuth errors to force redirects through the authorization server.

- App Registration: Attacker registers an OAuth app and sets the redirect URI to an attacker-controlled domain (`powerappsportals.com`, `github.io`, `surge.sh`, and `gitlab.io` were cited by Microsoft)

- Phishing Link: Victim receives a link that initiates an OAuth authorization flow with parameters designed to fail at the authorization step, such as `prompt=none` combined with an invalid or unapproved request

- Authorization Error: Entra ID reaches an authorization error state such as `interaction_required` or `access_denied`

- Error Redirect: Per the OAuth 2.0 spec, Entra ID redirects the victim’s browser to the app’s registered `redirect_uri` with error parameters appended

- Malicious Landing: The victim lands on the attacker’s page, which auto-downloads a ZIP containing LNK files and HTML smuggling loaders, or redirects to an AiTM phishing framework like EvilProxy

- Data Exfiltration: The `state` parameter is repurposed to carry the victim’s email address (encoded via Base64, hex, or custom schemes), so it auto-populates on the phishing page
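To make the steps above concrete, here is a minimal Python sketch of how a triage script might inspect a suspicious authorize link. It checks whether the link starts on Microsoft's login host, whether it forces silent auth with `prompt=none`, where the redirect lands, and whether `state` decodes to an email address. The function names, the sample URL, the placeholder client ID, and the `attacker-pages.github.io` host are all hypothetical, and only Base64 and hex are attempted; custom encodings would need their own decoders.

```python
import base64
import binascii
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlsplit


def decode_state(state: str) -> Optional[str]:
    """Try Base64 and hex decodings; return the text only if it looks like an email."""
    candidates = []
    try:
        padded = state + "=" * (-len(state) % 4)  # restore stripped padding
        candidates.append(base64.urlsafe_b64decode(padded).decode("utf-8"))
    except (binascii.Error, UnicodeDecodeError, ValueError):
        pass
    try:
        candidates.append(bytes.fromhex(state).decode("utf-8"))
    except (ValueError, UnicodeDecodeError):
        pass
    for text in candidates:
        if "@" in text and "." in text.rsplit("@", 1)[-1]:
            return text
    return None


def inspect_authorize_url(url: str) -> dict:
    """Summarize the traits of an authorize link that matter for this lure."""
    parts = urlsplit(url)
    query = {key: values[0] for key, values in parse_qs(parts.query).items()}
    return {
        "starts_on_microsoft": parts.hostname == "login.microsoftonline.com",
        "silent_prompt": query.get("prompt") == "none",
        "redirect_host": urlsplit(query.get("redirect_uri", "")).hostname,
        "email_in_state": decode_state(query.get("state", "")),
    }


# Hypothetical lure built for illustration only.
sample_url = "https://login.microsoftonline.com/common/oauth2/v2.0/authorize?" + urlencode({
    "client_id": "00000000-0000-0000-0000-000000000000",  # placeholder app ID
    "response_type": "code",
    "redirect_uri": "https://attacker-pages.github.io/landing",  # hypothetical host
    "scope": "openid",
    "prompt": "none",
    "state": base64.urlsafe_b64encode(b"victim@contoso.com").decode(),
})

print(inspect_authorize_url(sample_url))
```

A link that is silent, starts on Microsoft, and carries a decodable email in `state` is worth escalating even before any sign-in log entry exists.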

The key insight: the redirect itself is the win. Microsoft noted the sign-in can fail and still hand the attacker a phishing or malware-delivery opportunity because the browser lands on a malicious page after touching legitimate Microsoft infrastructure.

Why This Works

- The URL starts with `login.microsoftonline.com`, so it looks legitimate to users and URL filters

- In the observed Entra flow, `prompt=none` suppresses the normal consent UI and drives the request down the error path

- Even security-aware users who would decline consent still get redirected because the error itself triggers the redirect

- The redirect URI can point to any domain registered in the app: `github.io`, `netlify.app`, or free hosting services

- Microsoft’s advisory confirmed multiple threat actors targeting government and public-sector organizations

Which Error Outcomes Matter Most?

Microsoft’s write-up and the OAuth authorization-code flow docs give us two high-confidence Entra hunt signals:

- `AADSTS65001` / `interaction_required`: common when silent auth cannot complete because the app or requested permissions do not already have the required consent

- `AADSTS65004` / `access_denied`: common when a user explicitly declines consent

| Error code | Common meaning in this pattern | Hunt value |
|---|---|---|
| AADSTS65001 | Silent auth fails because prior consent is missing or interaction is required | High-confidence redirect-abuse signal |
| AADSTS65004 | User explicitly declines consent | High-confidence consent-phishing signal |
| AADSTS70011 | Invalid scope or malformed OAuth request | Supporting context only |
| AADSTS700016 | App not found in tenant | Supporting context only |
| AADSTS70000 | Invalid grant or broken authorization flow | Supporting context only |
| AADSTS7000218 | Missing client assertion / client auth issue | Supporting context only |

Other OAuth failures such as 70011, 700016, 70000, and 7000218 can still show up while attackers probe or misconfigure the flow, but Microsoft does not document one universal redirect behavior for those numeric codes across every endpoint and flow. Treat them as supporting context, not proof that a browser redirect occurred.

Detection implication: Seeing a burst of 65001 or 65004 errors against a single unfamiliar app is the strongest Entra-native signal. Broader OAuth error clusters are still worth triaging, but they need app registration and consent context.
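The two-tier reading of these codes can be written down as a small lookup. The sketch below is illustrative only: the tier names (`high`, `supporting`, `other`) and the function names are assumptions, while the code lists come straight from the table above.

```python
HIGH_CONFIDENCE = {"65001", "65004"}
SUPPORTING = {"70011", "700016", "70000", "7000218"}
TIER_ORDER = ["high", "supporting", "other"]  # strongest first


def hunt_tier(result_type: str) -> str:
    """Map a sign-in result code (with or without the AADSTS prefix) to a tier."""
    code = result_type.strip().upper()
    if code.startswith("AADSTS"):
        code = code[len("AADSTS"):]
    if code in HIGH_CONFIDENCE:
        return "high"
    if code in SUPPORTING:
        return "supporting"
    return "other"


def strongest_tier(result_types) -> str:
    """Return the strongest tier seen in a cluster of result codes."""
    tiers = {hunt_tier(code) for code in result_types}
    for tier in TIER_ORDER:
        if tier in tiers:
            return tier
    return "other"


print(strongest_tier(["70011", "AADSTS65004"]))
```

A cluster that only ever reaches `supporting` is a prompt to look at the app registration and consent history, not an alert on its own.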

Detection Strategy

We need detection at two layers:

- Proactive — Find risky OAuth app registrations before they’re weaponized

- Reactive — Detect active abuse patterns in sign-in and audit logs

MITRE ATT&CK Mapping

| Technique | ID | Detection |
|---|---|---|
| Spearphishing Link | T1566.002 | Rules 1, 3, 4 |
| Account Manipulation | T1098 | Rule 2 |
| User Execution: Malicious Link | T1204.001 | Rule 3 |

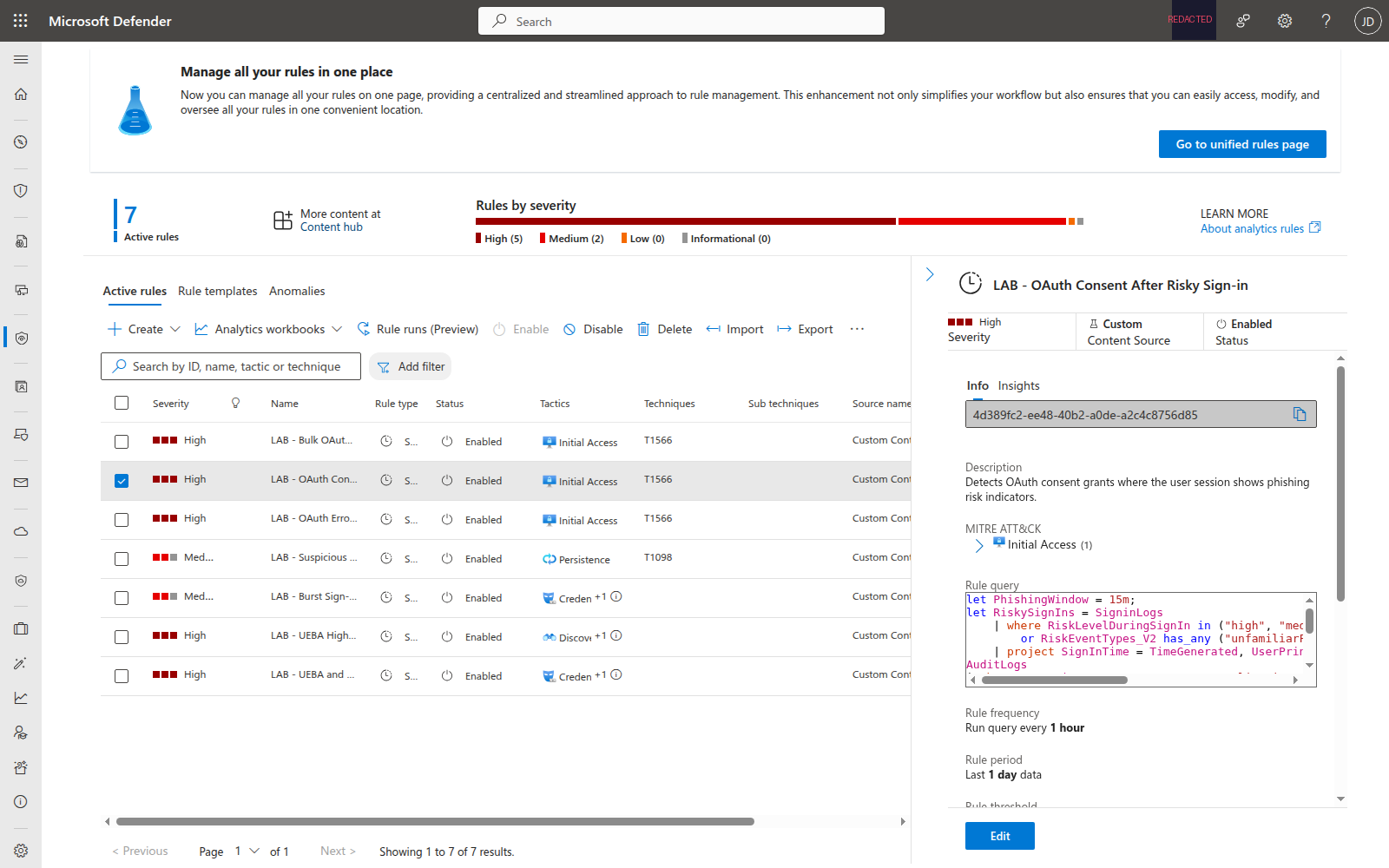

Sentinel Analytics Rules

Four scheduled analytics rules detect the core abuse patterns. Each runs hourly against the last 24 hours of data.

Rule 1: OAuth Consent After Risky Sign-in

Correlates SigninLogs risk indicators with AuditLogs consent events. If a user’s sign-in session shows phishing risk (unfamiliar features, anonymized IP, malicious IP, suspicious IP, malware-infected IP, or suspicious browser) and they grant OAuth consent within 15 minutes, something is wrong.

let PhishingWindow = 15m;

let RiskySignIns = SigninLogs

| where RiskLevelDuringSignIn in ("high", "medium")

or RiskEventTypes_V2 has_any (

"unfamiliarFeatures", "anonymizedIPAddress",

"maliciousIPAddress", "suspiciousIPAddress",

"malwareInfectedIPAddress", "suspiciousBrowser")

| project SignInTime = TimeGenerated,

UserPrincipalName, IPAddress,

RiskLevelDuringSignIn, RiskEventTypes_V2;

AuditLogs

| where OperationName == "Consent to application"

| extend ConsentUser = tostring(InitiatedBy.user.userPrincipalName)

| extend AppDisplayName = tostring(TargetResources[0].displayName)

| join kind=inner (RiskySignIns)

on $left.ConsentUser == $right.UserPrincipalName

| where TimeGenerated between (SignInTime .. (SignInTime + PhishingWindow))

| project TimeGenerated, UserPrincipalName = ConsentUser,

AppDisplayName, RiskLevel = RiskLevelDuringSignIn, SourceIP = IPAddress

Why this matters: Legitimate consent grants rarely happen during risky sessions. If Identity Protection flags the sign-in and the same user grants consent minutes later, you’re likely looking at a consent phishing attack, though travel or VPN use can produce the occasional benign match.
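The time-window logic in Rule 1 is easy to misread, so here is a minimal Python sketch of the same correlation over in-memory records. The record shape (`user`, `time`, `risk`, `app`) is hypothetical. Note one deliberate difference from the KQL: this sketch compares user names case-insensitively, which is an assumption on my part rather than something the rule does.

```python
from datetime import datetime, timedelta

PHISHING_WINDOW = timedelta(minutes=15)


def consents_after_risky_signin(signins, consents, window=PHISHING_WINDOW):
    """Return (user, app, risk) for consents made within `window` after a risky sign-in."""
    hits = []
    for consent in consents:
        for signin in signins:
            same_user = consent["user"].lower() == signin["user"].lower()
            in_window = signin["time"] <= consent["time"] <= signin["time"] + window
            if same_user and in_window:
                hits.append((consent["user"], consent["app"], signin["risk"]))
    return hits


t0 = datetime(2026, 3, 2, 9, 0)
signins = [{"user": "a@contoso.com", "time": t0, "risk": "high"}]
consents = [
    {"user": "A@contoso.com", "time": t0 + timedelta(minutes=10), "app": "Doc Viewer"},
    {"user": "a@contoso.com", "time": t0 + timedelta(minutes=20), "app": "Late App"},
    {"user": "b@contoso.com", "time": t0 + timedelta(minutes=5), "app": "Other App"},
]
print(consents_after_risky_signin(signins, consents))
```

Only the first consent matches: the second falls outside the 15-minute window and the third belongs to a user with no risky sign-in.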

Rule 2: Suspicious OAuth Redirect URI Registered

Watches for app registrations or updates that add redirect URIs pointing to free hosting, tunneling services, URL shorteners, or non-HTTPS endpoints.

let SuspiciousDomains = dynamic([

// Tunneling services

"ngrok.io", "ngrok-free.app", "trycloudflare.com",

"serveo.net", "localtunnel.me",

// Free hosting / PaaS

"workers.dev", "pages.dev", "herokuapp.com",

"netlify.app", "vercel.app", "github.io",

"gitlab.io", "surge.sh", "glitch.me", "replit.dev",

"powerappsportals.com",

// Webhook / request capture

"webhook.site", "requestbin.com", "pipedream.com",

// URL shorteners

"bit.ly", "tinyurl.com", "t.co", "rebrand.ly"]);

AuditLogs

| where OperationName in ("Add application", "Update application")

| mv-expand ModifiedProperty = TargetResources[0].modifiedProperties

| where ModifiedProperty.displayName == "AppAddress"

| extend NewRedirectUris = tostring(ModifiedProperty.newValue)

| extend InitiatedBy_ = coalesce(

tostring(InitiatedBy.user.userPrincipalName),

tostring(InitiatedBy.app.displayName))

| extend AppName = tostring(TargetResources[0].displayName)

| where NewRedirectUris has_any (SuspiciousDomains)

or NewRedirectUris has "http://"

| project TimeGenerated, AppName, NewRedirectUris, InitiatedBy_

Tuning tip: Add your organization’s legitimate development domains to an exclusion list. Developers using ngrok for local testing will generate false positives — but you should know about those too.
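When tuning exclusions, it can help to test candidate redirect URIs offline. The sketch below parses each URI and matches on the host suffix, so a watched domain that only appears in a path or query string does not trigger. It uses a small subset of the rule's domain list, and it skips loopback hosts for the non-HTTPS check, which is an assumption that differs from the KQL above (the rule flags every `http://` URI). The function name and finding labels are hypothetical.

```python
from urllib.parse import urlsplit

# Subset of the rule's list, for illustration only.
SUSPICIOUS_SUFFIXES = ("ngrok.io", "github.io", "surge.sh", "t.co")
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def redirect_uri_findings(uri: str) -> list:
    """Return the reasons a redirect URI looks risky (empty list if none)."""
    parts = urlsplit(uri)
    host = (parts.hostname or "").lower()
    findings = []
    # Match the domain itself or any subdomain, never a bare substring.
    if any(host == s or host.endswith("." + s) for s in SUSPICIOUS_SUFFIXES):
        findings.append("suspicious-domain")
    if parts.scheme == "http" and host not in LOOPBACK_HOSTS:
        findings.append("non-https")
    return findings


for uri in (
    "https://evil.github.io/cb",
    "https://login.contoso.com/cb?next=github.io",
    "http://localhost:5000/cb",
    "http://app.contoso.com/cb",
):
    print(uri, redirect_uri_findings(uri))
```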

Rule 3: OAuth Error-Based Redirect Pattern

Detects sign-in attempts that result in the Entra errors most closely associated with redirect abuse. The strongest signals are AADSTS65001 and AADSTS65004. The rule also carries a short list of secondary OAuth failures that often appear when attackers or broken apps probe the same flow.

SigninLogs

| where ResultType in (

"65001", // User hasn't consented (prompt=none attack vector)

"65004", // User declined consent

"70011", // Invalid scope or other OAuth parameter issue

"700016", // App not found in tenant

"70000", // Invalid grant

"7000218", // Missing client assertion

"AADSTS65001", "AADSTS65004",

"AADSTS70011", "AADSTS700016")

| where AppDisplayName !in (

"Microsoft Office", "Azure Portal",

"Microsoft Teams", "Outlook Mobile")

| summarize ErrorCount = count(),

DistinctUsers = dcount(UserPrincipalName),

Users = make_set(UserPrincipalName, 10),

ErrorCodes = make_set(ResultType),

IPs = make_set(IPAddress, 10)

by AppDisplayName, AppId, bin(TimeGenerated, 1h)

| where ErrorCount > 3 or DistinctUsers > 2

| project TimeGenerated, AppDisplayName, AppId,

ErrorCount, DistinctUsers, Users, ErrorCodes, IPs

Why we include non-redirect error codes: 65001 and 65004 are the high-confidence redirect-abuse signals. The additional OAuth failures in the rule are secondary context that can strengthen a case when they cluster around the same app and time window.
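For readers less familiar with `summarize ... by bin(TimeGenerated, 1h)`, the aggregation in Rule 3 can be sketched in Python as follows. The event shape (`app_id`, `user`, `time`) is hypothetical; the thresholds mirror the rule's `ErrorCount > 3 or DistinctUsers > 2`.

```python
from collections import defaultdict
from datetime import datetime


def flag_error_bursts(events, min_errors=4, min_users=3):
    """Group error events per app and hour; return the (app_id, hour) buckets that trip a threshold."""
    buckets = defaultdict(lambda: {"errors": 0, "users": set()})
    for event in events:
        hour = event["time"].replace(minute=0, second=0, microsecond=0)
        bucket = buckets[(event["app_id"], hour)]
        bucket["errors"] += 1
        bucket["users"].add(event["user"])
    return sorted(
        key for key, bucket in buckets.items()
        if bucket["errors"] >= min_errors or len(bucket["users"]) >= min_users
    )


events = [
    # Three different users hit the same app within one hour: flagged.
    {"app_id": "app-a", "user": "u1", "time": datetime(2026, 3, 2, 9, 5)},
    {"app_id": "app-a", "user": "u2", "time": datetime(2026, 3, 2, 9, 10)},
    {"app_id": "app-a", "user": "u3", "time": datetime(2026, 3, 2, 9, 50)},
    # One user, four errors split across two hourly bins: not flagged.
    {"app_id": "app-b", "user": "u1", "time": datetime(2026, 3, 2, 9, 55)},
    {"app_id": "app-b", "user": "u1", "time": datetime(2026, 3, 2, 9, 58)},
    {"app_id": "app-b", "user": "u1", "time": datetime(2026, 3, 2, 10, 2)},
    {"app_id": "app-b", "user": "u1", "time": datetime(2026, 3, 2, 10, 4)},
]
print(flag_error_bursts(events))
```

The `app-b` case shows a side effect of fixed hourly bins: activity that straddles a bin boundary can stay under the threshold, which is one reason to keep the thresholds low.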

Rule 4: Bulk OAuth Consent to Single App

When 3+ users consent to the same app within an hour, it strongly indicates a phishing campaign pushing users to authorize a malicious application.

AuditLogs

| where OperationName == "Consent to application"

| extend ConsentUser = tostring(InitiatedBy.user.userPrincipalName)

| extend AppName = tostring(TargetResources[0].displayName)

| extend AppId = tostring(TargetResources[0].id)

| summarize ConsentCount = dcount(ConsentUser),

ConsentUsers = make_set(ConsentUser, 20),

FirstConsent = min(TimeGenerated),

LastConsent = max(TimeGenerated)

by AppName, AppId, bin(TimeGenerated, 1h)

| where ConsentCount >= 3

| project TimeGenerated, AppName, AppId,

ConsentCount, ConsentUsers

Hunting Queries

Beyond automated detection, five hunting queries support proactive threat hunting:

- Enumerate All OAuth Apps with Delegated Permissions: Baseline audit of every app with user-granted permissions over the last 90 days

- OAuth Sign-ins from Non-Corporate IPs: Find OAuth app authentications from unexpected locations (customize the corporate IP ranges)

- Recently Registered Apps with High-Privilege Permissions: Apps created in the last 14 days requesting `Mail.Read`, `Files.ReadWrite.All`, `Directory.ReadWrite.All`, etc.

- OAuth Redirect URI Inventory: Full audit trail of redirect URI changes across all app registrations

- Token Replay After OAuth Redirect Error: Detect the pattern where an OAuth `65001` error redirect is followed by a successful token acquisition from a different IP within 30 minutes, the signature of a token relay attack

The full KQL for all five hunting queries is in the companion lab. Import them into Sentinel Hunting > Queries to run proactive hunts against your OAuth telemetry.
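As an illustration of the fifth hunt's logic (not the lab's actual query), the sketch below pairs a `65001` failure with a later success for the same user from a different IP inside a 30-minute window. The event shape is hypothetical; `"0"` is the result code for a successful sign-in in SigninLogs.

```python
from datetime import datetime, timedelta


def token_replay_candidates(events, window=timedelta(minutes=30)):
    """Return (user, error_ip, success_ip) where a success follows a 65001 error from another IP."""
    errors = [e for e in events if e["result"] == "65001"]
    successes = [e for e in events if e["result"] == "0"]
    hits = []
    for err in errors:
        for ok in successes:
            if (
                ok["user"] == err["user"]
                and ok["ip"] != err["ip"]
                and err["time"] < ok["time"] <= err["time"] + window
            ):
                hits.append((err["user"], err["ip"], ok["ip"]))
    return hits


t0 = datetime(2026, 3, 2, 14, 0)
events = [
    {"user": "a@contoso.com", "ip": "203.0.113.5", "result": "65001", "time": t0},
    # Different IP, 10 minutes later: candidate.
    {"user": "a@contoso.com", "ip": "198.51.100.7", "result": "0", "time": t0 + timedelta(minutes=10)},
    # Same IP as the error: ignored.
    {"user": "a@contoso.com", "ip": "203.0.113.5", "result": "0", "time": t0 + timedelta(minutes=12)},
    # Different IP but outside the window: ignored.
    {"user": "a@contoso.com", "ip": "192.0.2.9", "result": "0", "time": t0 + timedelta(minutes=45)},
]
print(token_replay_candidates(events))
```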

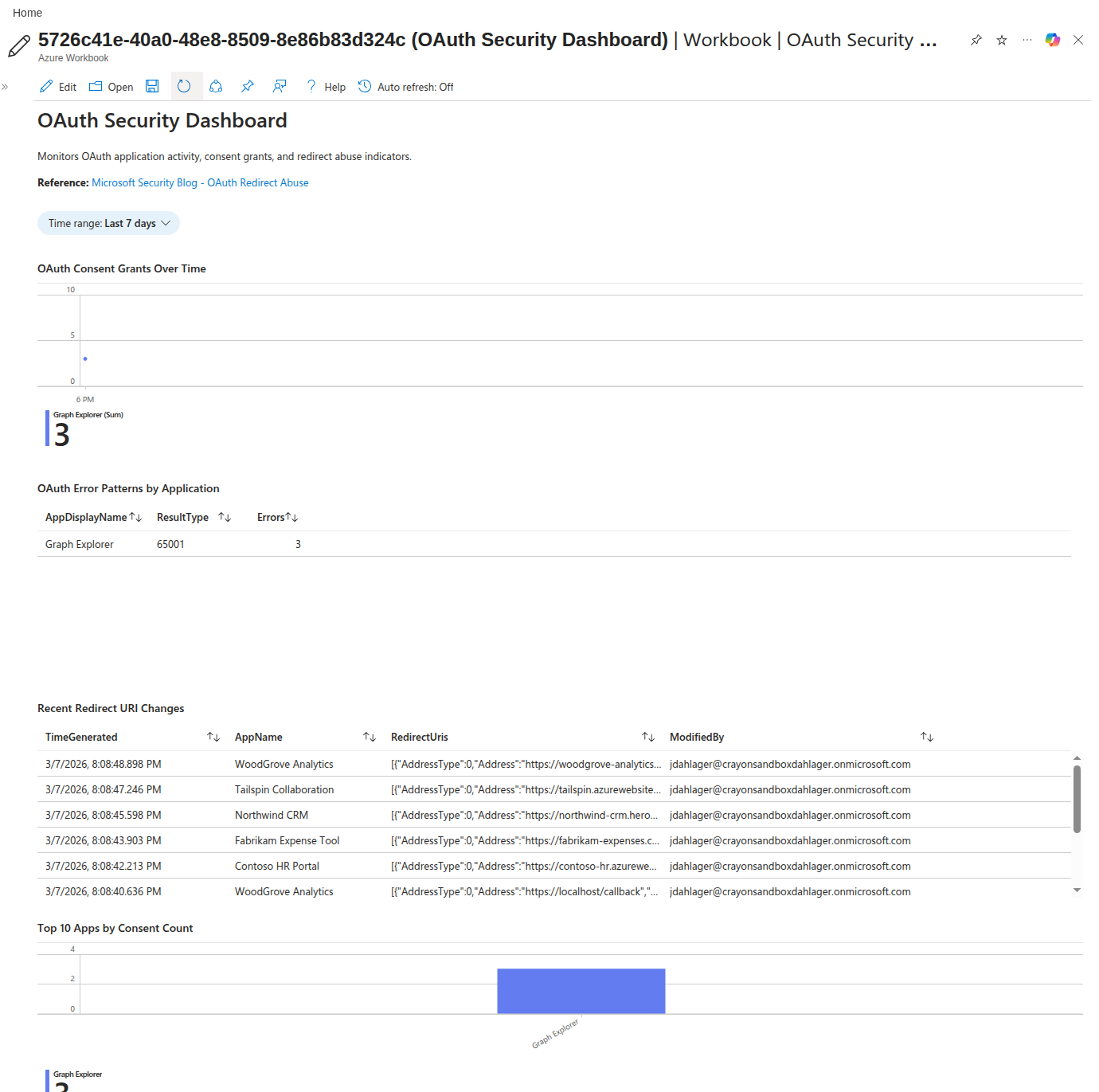

OAuth Security Workbook

The lab deploys an Azure Workbook that provides a single-pane view of OAuth activity across four panels:

- Consent Grants Over Time: Timechart of OAuth consent events by application, showing spikes that indicate bulk consent campaigns

- OAuth Error Patterns by Application: Table of primary redirect-abuse indicators (`65001`, `65004`) plus related OAuth failures grouped by app and error code

- Recent Redirect URI Changes: Audit trail of redirect URI modifications across all app registrations

- Top 10 Apps by Consent Count: Bar chart highlighting apps with the most user consents, surfacing outliers

The workbook uses the same KQL patterns as the analytics rules, giving SOC analysts a dashboard to investigate alerts in context. The time range parameter defaults to 7 days but can be adjusted for broader investigations.

Entra ID Hardening

Detection alone isn’t enough. The lab includes hardening scripts that reduce the attack surface:

Restrict User Consent

The most impactful control: restrict which apps users can consent to.

$authPolicy = az rest --method GET `

--url 'https://graph.microsoft.com/v1.0/policies/authorizationPolicy' `

| ConvertFrom-Json

$currentPolicies = @($authPolicy.defaultUserRolePermissions.permissionGrantPoliciesAssigned)

$updatedPolicies = @(

$currentPolicies | Where-Object { $_ -like 'managePermissionGrantsForOwnedResource.*' }

)

$updatedPolicies += 'managePermissionGrantsForSelf.microsoft-user-default-low'

# Wrap in @() so a single remaining policy stays an array and serializes as a JSON array
$updatedPolicies = @($updatedPolicies | Select-Object -Unique)

$body = @{

defaultUserRolePermissions = @{

permissionGrantPoliciesAssigned = $updatedPolicies

}

} | ConvertTo-Json -Depth 5

az rest --method PATCH `

--url 'https://graph.microsoft.com/v1.0/policies/authorizationPolicy' `

--body $body --headers 'Content-Type=application/json'

This policy blocks most user-driven consent phishing and materially reduces risky third-party app approvals. It does not revoke existing grants, and redirect-only lures can still succeed if the attacker only needs the browser bounce.
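The list manipulation in that script is the part most worth dry-running before touching a tenant. The Python sketch below reproduces the same transformation on a plain list: keep any owned-resource policies, drop other self-consent policies, add the low-risk policy, and de-duplicate. The function name and the sample input are hypothetical, and the prefix match here is case-insensitive to approximate PowerShell's `-like`.

```python
import json

LOW_RISK_POLICY = "managePermissionGrantsForSelf.microsoft-user-default-low"
OWNED_RESOURCE_PREFIX = "managepermissiongrantsforownedresource."


def restrict_user_consent(current_policies):
    """Return the policy list to assign: owned-resource policies plus the low-risk policy."""
    kept = [p for p in current_policies if p.lower().startswith(OWNED_RESOURCE_PREFIX)]
    kept.append(LOW_RISK_POLICY)
    return list(dict.fromkeys(kept))  # de-duplicate while preserving order


current = [
    "ManagePermissionGrantsForSelf.microsoft-user-default-legacy",
    "managePermissionGrantsForOwnedResource.example-policy",  # hypothetical policy ID
]
body = {
    "defaultUserRolePermissions": {
        "permissionGrantPoliciesAssigned": restrict_user_consent(current)
    }
}
print(json.dumps(body, indent=2))
```

The function always returns a list, so the PATCH body carries a JSON array even when only one policy remains.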

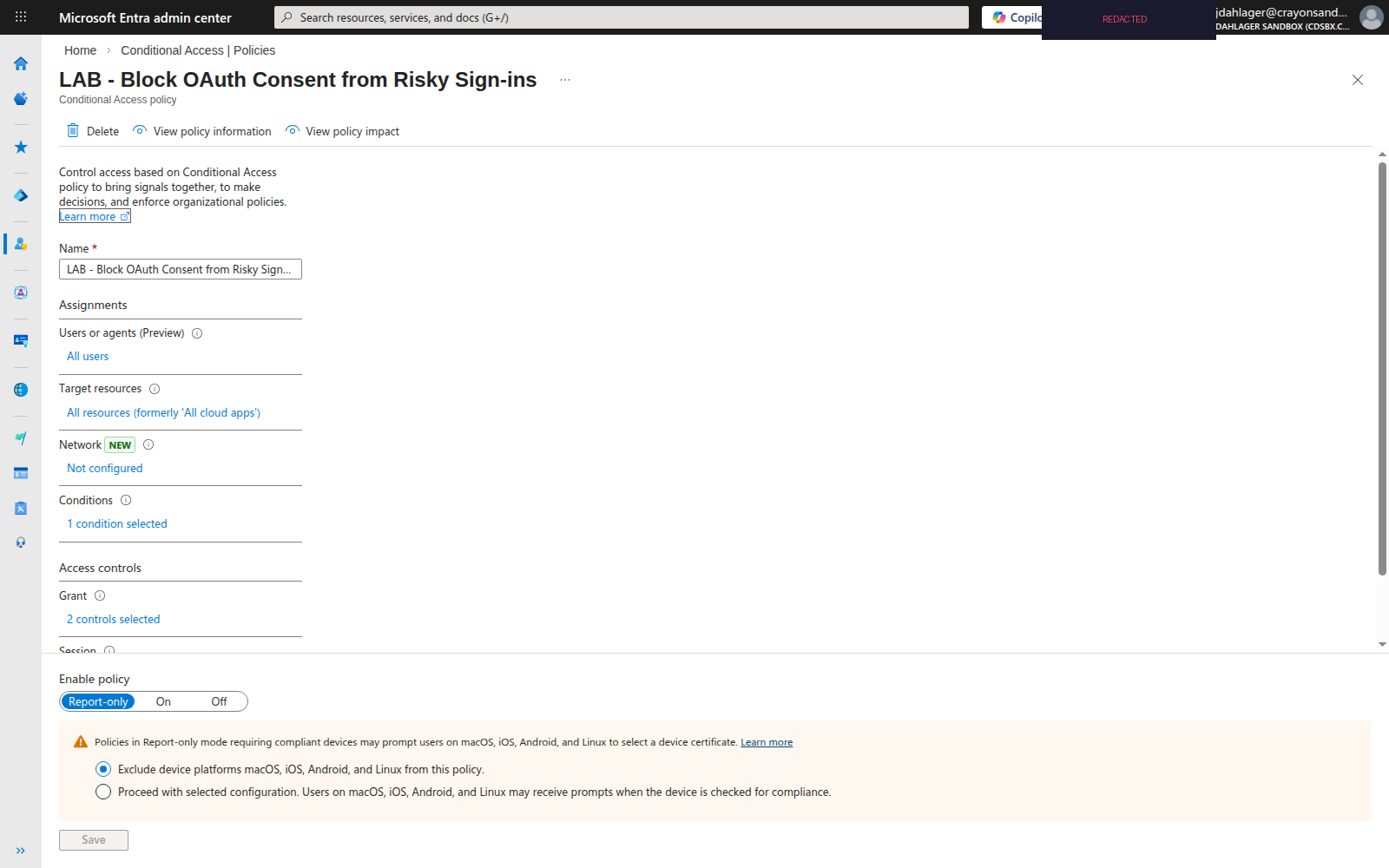

Conditional Access Policy

A CA policy adds step-up authentication to risky OAuth-related sign-ins:

- Applies to: All users

- Conditions: Sign-in risk = High or Medium

- Grant controls: Require MFA

- Session controls: Sign-in frequency = Every time

The policy deploys in report-only mode. Run it for 7 days, review the CA insights workbook for impact, exclude emergency accounts before enforcement, then switch it on.
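For orientation, the settings listed above might translate into a Microsoft Graph `conditionalAccessPolicy` body roughly like the one built below. This is a sketch, not the lab's deployment code: the field names reflect my understanding of the Graph v1.0 schema and should be checked against the current reference before use, and the display name and break-glass placeholder are hypothetical.

```python
import json

BREAK_GLASS_ID = "<break-glass-account-object-id>"  # placeholder, replace before use


def build_risky_signin_policy(exclude_user_ids):
    """Build a report-only CA policy body: MFA on medium/high sign-in risk, re-auth every time."""
    return {
        "displayName": "Require MFA for risky sign-ins (report-only)",  # hypothetical name
        "state": "enabledForReportingButNotEnforced",  # report-only mode
        "conditions": {
            "clientAppTypes": ["all"],
            "applications": {"includeApplications": ["All"]},
            "users": {
                "includeUsers": ["All"],
                "excludeUsers": list(exclude_user_ids),  # emergency accounts
            },
            "signInRiskLevels": ["high", "medium"],
        },
        "grantControls": {"operator": "OR", "builtInControls": ["mfa"]},
        "sessionControls": {
            "signInFrequency": {
                "isEnabled": True,
                "authenticationType": "primaryAndSecondaryAuthentication",
                "frequencyInterval": "everyTime",
            }
        },
    }


policy = build_risky_signin_policy([BREAK_GLASS_ID])
print(json.dumps(policy, indent=2))
```

Keeping the emergency-account exclusion in the body from day one avoids the common mistake of adding it only at enforcement time.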

OAuth App Audit

The Audit-OAuthApps.ps1 script enumerates all app registrations and service principals via Microsoft Graph to flag:

- Apps with redirect URIs pointing to `ngrok.io`, `herokuapp.com`, `workers.dev`, etc.

- Apps with non-HTTPS redirect URIs (excluding localhost)

- Apps with high-privilege delegated permissions (`Mail.Read`, `Files.ReadWrite.All`, `Directory.ReadWrite.All`)

- User-consented permissions (vs admin-consented)

- Multi-tenant apps registered in your tenant

The audit outputs a CSV with risk scores, sorted by severity. Run it weekly.
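The script's actual scoring is not reproduced here, but the idea of turning those flags into a sortable score can be sketched as follows. The weights, the input shape, and the function names are all hypothetical; the redirect checks are taken as precomputed booleans so the sketch stays focused on scoring and ordering.

```python
HIGH_PRIV_SCOPES = {"Mail.Read", "Files.ReadWrite.All", "Directory.ReadWrite.All"}

# Hypothetical weights chosen for illustration, not the lab's values.
WEIGHTS = {
    "risky_redirect": 40,
    "non_https_redirect": 20,
    "high_priv_scope": 25,
    "user_consented": 10,
    "multi_tenant": 5,
}


def score_app(app: dict) -> int:
    """Sum the weights of every risk flag present on an app record."""
    score = 0
    for flag in ("risky_redirect", "non_https_redirect", "user_consented", "multi_tenant"):
        if app.get(flag):
            score += WEIGHTS[flag]
    if HIGH_PRIV_SCOPES & set(app.get("delegated_scopes", [])):
        score += WEIGHTS["high_priv_scope"]
    return score


def rank_apps(apps):
    """Return (name, score) pairs sorted from highest to lowest risk."""
    return sorted(((a["name"], score_app(a)) for a in apps), key=lambda item: -item[1])


apps = [
    {"name": "Internal HR Portal", "delegated_scopes": ["User.Read"]},
    {"name": "Doc Viewer", "risky_redirect": True, "user_consented": True,
     "delegated_scopes": ["Mail.Read", "offline_access"]},
    {"name": "Legacy Sync", "non_https_redirect": True, "multi_tenant": True},
]
for name, score in rank_apps(apps):
    print(f"{score:>3}  {name}")
```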

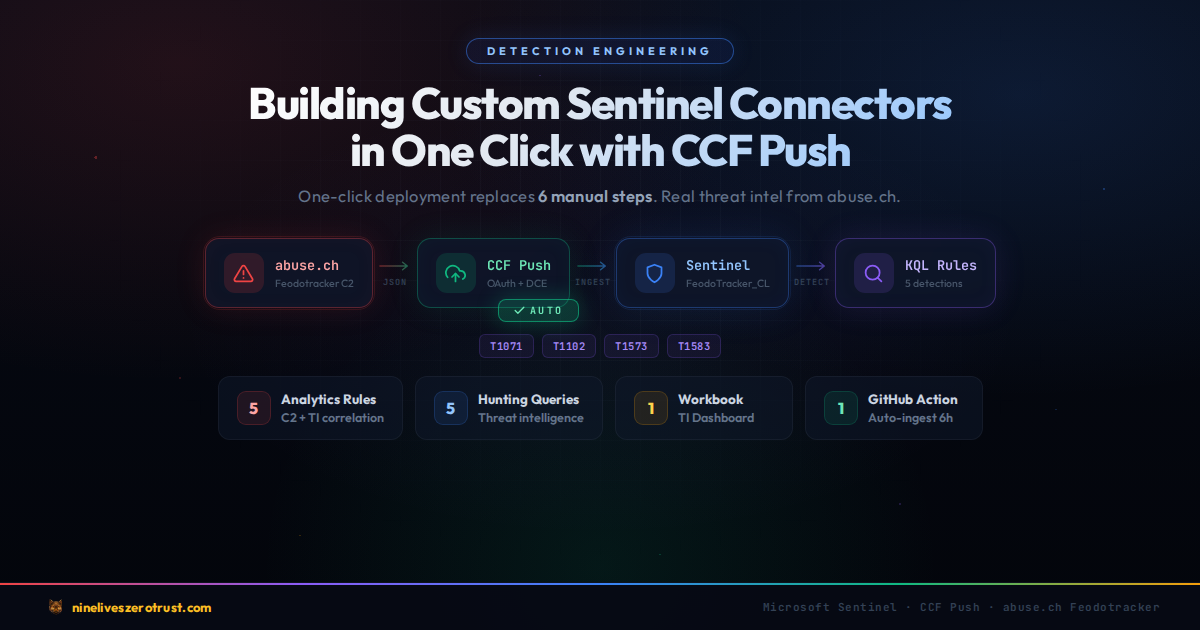

Deployment

The entire lab deploys to an existing Microsoft Sentinel workspace:

git clone https://github.com/j-dahl7/oauth-redirect-abuse-sentinel.git

cd oauth-redirect-abuse-sentinel

# Deploy detection content and run the audit

./scripts/Deploy-Lab.ps1 -ResourceGroup "rg-sentinel-lab" -WorkspaceName "law-sentinel-lab"

# Optional tenant hardening

./scripts/Deploy-Lab.ps1 -ResourceGroup "rg-sentinel-lab" -WorkspaceName "law-sentinel-lab" -ApplyHardening

The script deploys:

- 4 Sentinel analytics rules (scheduled, hourly)

- 1 Sentinel workbook (OAuth Security Dashboard)

- OAuth hardening policies only when `-ApplyHardening` is present

- OAuth app audit report (CSV)

See the full lab documentation for prerequisites, testing steps, and cleanup.

Key Takeaways

- OAuth redirect abuse bypasses simple URL filtering: The link starts on `login.microsoftonline.com`, which looks legitimate to users and many controls.

- `AADSTS65001` is a primary hunting signal: In Microsoft’s Entra example, the sign-in can fail and still redirect the browser to the attacker’s landing page.

- Not every OAuth error means a redirect: Treat `65001` and `65004` as the strongest browser-side signals, and use the rest as supporting context.

- Restrict user consent now: The low-risk verified-publisher policy meaningfully reduces consent phishing, but you still need to review existing grants.

- Deploy CA policies for risky sessions: Step up risky sign-ins before the user reaches the malicious app flow.

- Hunt, don’t just detect: The token replay hunting query (Hunt 5) catches attacks that no single-event rule will find.

Resources

- Microsoft Security Blog: OAuth Redirection Abuse (March 2, 2026)

- RFC 9700: OAuth 2.0 Security Best Current Practice — Section 4.11.2 covers “Authorization Server as Open Redirector”

- Microsoft identity platform: Authorization code flow

- Microsoft: Configure user consent settings

- Microsoft: Conditional Access for risky sign-ins

- Azure Monitor Logs reference: SigninLogs

- Companion Lab: OAuth Redirect Abuse Detection

Jerrad Dahlager, CISSP, CCSP

Cloud Security Architect · Adjunct Instructor

Marine Corps veteran and firm believer that the best security survives contact with reality.
